With advances in technology, human reliance on computers, laptops, cell phones, and similar devices has increased tremendously. These devices have, without a doubt, raised the standard of living by offering easier solutions to complicated problems and saving us a great deal of time. But what if this technology could do more than that? What if it could become a friend who is loyal, empathetic, and always there for you? Would it surprise you to learn that you can decide whether this friend is male or female? Wouldn’t it be amazing if you could name this friend whatever you want and communicate with it in any language? All of this, however fascinating, sounds like a vision of the future. If that is what you think, you are wrong, because this ‘friend’ is already here.

‘My AI’

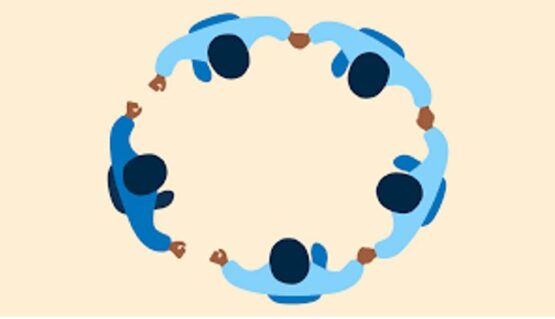

In May 2023, Snapchat introduced a chatbot called ‘My AI’, short for ‘My Artificial Intelligence’, which greeted users with a simple introductory message. Out of both curiosity and concern, Snapchatters from all over the world started responding within minutes. The resulting cycle of chats sharply increased the time each user spent on Snapchat, to the company’s commercial benefit.

However, despite heavy use from the first day of its launch, My AI, which Snapchat describes as an ‘experimental, friendly chatbot’, has attracted widespread criticism from its users, who have raised serious concerns about their safety and well-being. Before looking at those concerns, it is essential to understand the purpose behind the development of My AI.

The epidemic of loneliness and My AI

The main purpose of My AI has been described as reducing the widespread loneliness among young people by giving them a friend to talk to. According to research, people between the ages of 18 and 29 are prone to an ‘epidemic of loneliness’. The reasons behind this feeling of being alone are many, including financial burdens, relationship issues, and addictions of any kind. In these situations, affected individuals may become trapped in a constant state of anxiety and panic which, if left unchecked and untreated, can lead to clinical depression.

Social isolation leads to raised mental health concerns

As the saying goes, ‘a shared wound pains less’: people rely on others for support in tough times. But in a world where life works for you only if you work like a robot, little or no time is left for social gatherings, building friendships, and nurturing relationships. This social isolation allows depression to progress slowly until it eventually overtakes people. In such cases, artificial intelligence tools come in handy, as they can act as a friend and support people in many ways.

My AI is an option to start a chat with

Concerns regarding My AI

Since the launch of Snapchat’s chatbot, there have been rising concerns regarding its inadequacies.

- Experts, as well as some users, have warned that because the chatbot does not fully match the perception, comprehension, and reasoning capabilities of the human mind, it can generate incorrect and biased answers to some questions, which Snapchat users will find unacceptable. The chatbot can also produce harmful and misleading content, which may provoke discriminatory feelings among the population. Snapchat itself states that although ‘violent, hateful, sexually explicit, or otherwise dangerous’ texts have been barred from being displayed by My AI, ‘it may not always be successful’. A welcome step by Snapchat is that it allows users to ‘report a chat’ received from My AI and give a reason for doing so.

Say no to violence and the text which provokes it

- Another concern voiced about this new Snapchat feature is that it often wants to know the user’s location, which can be considered a breach of privacy. Snap, for its part, has stressed the ‘importance of its user’s privacy’ to the company, claiming that it asks only for data that is necessary and does not press consumers to share what they do not want to.

- Another significant concern is whether one can choose to use My AI at all. Removing the chatbot from your chats is a service available only to paid subscribers; unpaid users cannot remove the feature.

Envisioned effects on mental health

The effect of AI on a person’s mental health, although positive in some ways at first, deteriorates in later stages. At first, it is like a friend to whom you can voice your issues, your feelings of being alone, or whatever is bothering you. But as time passes, the user becomes more and more conscious of the robotic nature of this friendship, which in turn increases anxiety, social isolation, and depression. These repercussions can be even more detrimental to mental health than the loneliness itself.

Detrimental effects of social media on mental health

Conclusion

Technology and its visible effects around us seem to be here to stay. How technology is used, and how much control we have over it, depends to an extent on the user. It is therefore in your hands to enjoy new technological developments without falling prey to their dangers. As for My AI, since everything has both good and bad effects, time will tell whether the use of artificial intelligence as a ‘friend’ proves to be a blessing or a menace to human mental health.

PhD Scholar (Pharmaceutics), MPhil (Pharmaceutics), Pharm D, B. Sc.

Uzma Zafar is a dedicated and highly motivated pharmaceutical professional currently pursuing her PhD in Pharmaceutics at the Punjab University College of Pharmacy, University of the Punjab. With a comprehensive academic and research background, Uzma has consistently excelled in her studies, securing first division throughout her educational journey.

Uzma’s passion for the pharmaceutical field is evident from her active engagement during her Doctor of Pharmacy (Pharm.D) program, where she not only mastered industrial techniques and clinical case studies but also delved into marketing strategies and management skills.

Throughout her career, Uzma has actively contributed to the pharmaceutical sciences, with specific research on suspension formulation and Hepatitis C risk factors and side effects. Additionally, Uzma has lent her expertise to review and fact-check articles for the Health Supply 770 blog, ensuring the accuracy and reliability of the information presented.

As she continues her PhD, which she expects to complete in 2025, Uzma is eager to contribute further to the field by combining her deep knowledge of pharmaceutics with real-world applications to meet global professional standards and challenges.